by Chris

Watching and reacting to the Buscemi-Lawrence video

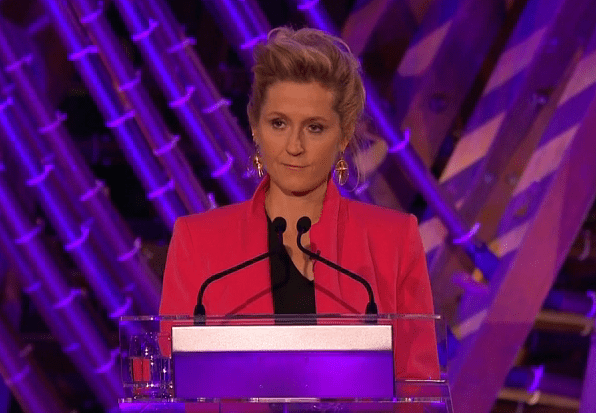

At first glance it looks like Steve Buscemi has expanded his wardrobe, donning a sparkling red dress at an awards Q&A event. Once he speaks, or rather she speaks, you realise that the voice coming from his mouth is not his own. It’s the voice of Jennifer Lawrence.

My smirk at seeing Buscemi in a dress is replaced by confusion as I realise what I am watching. It’s Buscemi’s face superimposed onto Lawrence’s body. Actually, it’s more than that. Online, we are accustomed to Frankenstein images of faces crudely cropped onto different bodies.

Only there’s nothing crude about this creation. Buscemi’s face is moulded onto Lawrence’s body, so that her gestures become his own. Head, eye and mouth movements, almost imperceptible to the eye, align perfectly. It’s such a close recreation that, without prior knowledge of both actors and recognition of their faces and distinctive voices, one would find it difficult to know that this event did not occur as shown, in front of live cameras. Instead, it was dreamt up and created by a programmer.

The depth of the fakery produced by this technology is apt given its name: a deepfake.

How are deepfakes made?

In fact, the name ‘deepfake’ comes from the deep learning algorithms used to create these fake moving images.

To make a deepfake, one requires three ingredients: a computer, a working knowledge of a type of neural network known as a ‘generative adversarial network’ or GAN, and video footage of a person or persons to train the network. Alternatively, it is just as easy to pay a programmer to create a deepfake for you, for little cost.

Video footage is fed into the GAN, which is actually composed of two neural networks. The first network tries to make the video footage align as closely as possible, superimposing one face onto another body and matching intricate facial details such as lip movement. The second network identifies mistakes in the output, acting as an adversary of the first network, teaching it and improving its final product. Hence, over time, even without high-quality video footage, the first network is able to produce hyper-realistic creations such as the Buscemi-Lawrence video.
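For the curious, the push and pull between the two networks can be sketched in a few lines of Python. This is a toy under heavy assumptions, not how any real deepfake tool is built: the "footage" is just numbers clustered around 4, the first network is a single shift value `b`, the second is a one-line classifier with parameters `w` and `c`, and every function name here is made up for illustration.

```python
import math
import random


def sigmoid(s):
    # Clamp the input so math.exp cannot overflow.
    s = max(-60.0, min(60.0, s))
    return 1.0 / (1.0 + math.exp(-s))


def discriminate(w, c, x):
    """Second network: estimated probability that sample x is real."""
    return sigmoid(w * x + c)


def discriminator_step(w, c, x_real, x_fake, lr):
    """One gradient step teaching the critic to score real high and fake low."""
    d_real = discriminate(w, c, x_real)
    d_fake = discriminate(w, c, x_fake)
    # Gradients of -log D(x_real) - log(1 - D(x_fake)) with respect to w and c.
    grad_w = -(1.0 - d_real) * x_real + d_fake * x_fake
    grad_c = -(1.0 - d_real) + d_fake
    return w - lr * grad_w, c - lr * grad_c


def generator_step(b, w, c, noise, lr):
    """One gradient step nudging the forger towards fooling the critic."""
    d_fake = discriminate(w, c, noise + b)
    # Gradient of -log D(noise + b) with respect to the shift b.
    grad_b = -(1.0 - d_fake) * w
    return b - lr * grad_b


def train_toy_gan(steps=500, lr=0.05, real_mean=4.0, seed=0):
    """Alternate critic and forger updates on one real and one fake sample."""
    rng = random.Random(seed)
    b, w, c = 0.0, 0.0, 0.0  # forger shift, critic weight, critic bias
    for _ in range(steps):
        x_real = rng.gauss(real_mean, 0.5)  # stand-in for genuine footage
        noise = rng.gauss(0.0, 0.5)         # raw material for the fake
        w, c = discriminator_step(w, c, x_real, noise + b, lr)
        b = generator_step(b, w, c, noise, lr)
    return b, w, c
```

Calling `train_toy_gan()` returns the final `b`, `w` and `c`. In a toy this small the two sides may chase each other rather than settle neatly, and real systems rely on far larger networks trained on images, but the alternating "spot the fake, then fix the fake" loop is the idea described above.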

At present, two types of deepfakes have been shared online, with the potential for more variations. The first is a basic face swap, as seen in the Buscemi-Lawrence video and in fake celebrity pornographic videos. The second is a voice swap.

In an awareness-raising campaign, BuzzFeed commissioned two deepfake videos of Barack Obama. Both videos show Obama speaking to the camera from the Oval Office in what looks like a public address. In the first video his voice is replaced by that of comedian and impersonator Jordan Peele. In the second, his voice is replaced by old audio of himself, taken from footage filmed decades earlier while Obama was a student, in a very different role and setting.

What is scary is that both deepfakes are almost seamless in quality. Obama’s mouth and facial expressions move to form the words spoken by Peele, or those spoken by him as a student in the late 70s. The only evidence of the deception is the low fidelity of the audio.

Deepfakes may become even more realistic with the arrival of digital voice impersonation, developed by Montreal startup Lyrebird. Lyrebird’s machine learning algorithm requires only a small collection of words read aloud to train its neural network to produce an accurate model of your voice, which can then form new sentences on its own.

It works by breaking down the audio of your voice into phonemes, the building blocks of speech, and then feeding them into a GAN, which again improves the fidelity of its voice output over time as it trains against itself. An hour later, Lyrebird’s algorithm can speak in your voice, making statements you would not dream of saying out loud.

The potential of deepfakes

The silliness of watching famous actors switch faces at award ceremonies masks the incredible power of the technology. Deepfakes give someone the power to manipulate another person’s image and, when combined with digital voice impersonation, even their voice. As with any powerful tool, its potential is great, for good and for harm.

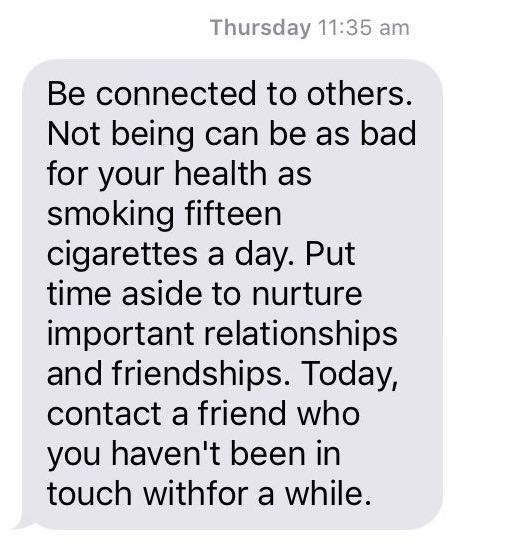

The benefits of deepfake technology are already being realised in computer graphics, where it improves the look of games and special effects. It’s cheap and accessible, offering studio-level graphics to a wider number of people. Image and voice manipulation may also benefit people who face online discrimination because of the way they look or speak.

Admittedly, deepfakes already have a reputation for harm. They first surfaced online in pornographic videos where the faces of female celebrities were superimposed onto the bodies of pornographic actors. In a December 2018 article for the Washington Post, Scarlett Johansson, a victim of these malicious deepfakes, voiced her concern for those less famous.

Johansson pointed out that her fame provided a level of protection not afforded to other people. She makes an important point. When you are famous, you are accustomed to your image and voice being manipulated, often for comedic effect. The public is also accustomed to the image and voice manipulation of celebrities, so we cast a critical eye over any footage linked to a celebrity, questioning its truth.

Would we use the same critical eye when watching our favourite YouTuber, or a video of a friend or family member?

It would be easy for someone to create a malicious video of you making hateful statements or acting in illegal ways. All they would require is some video and audio of you speaking into a camera. The video-sharing platform YouTube is a veritable mine for the future manufacture of deepfakes. Millions of people around the world have uploaded hours of video and audio content, freely accessible to all. Once another person has control of your image and voice, their power to make you appear to act according to their wishes makes blackmail a serious risk. Malicious intent to harm your public image through the release of embarrassing or illegal material is already a problem, and one that technology makes easier.

Deepfake technology enables blackmail: people are targeted, embarrassing or illegal behaviour is modelled and money is exchanged.

On a fundamental level, once images and audio can be manipulated to the extent shown in deepfakes, technological deception is no longer perceptible by the human brain. Seeing is no longer believing, at least online. Online, truth will die. Every video will be tainted with the hue of deepfake technology, precisely because we will not be able to tell whether it’s real or not.

So what are we to do?

Deepfakes are trained on video footage, so be wary of sharing your image and voice online, to reduce easy access to training data. There’s little point in giving the sculptor more clay. Still, for many of us our image and voice already have a place online, in social media accounts or on video-sharing platforms such as YouTube. For many, our livelihoods depend on our online public image. To blunt the most malicious use of deepfakes, blackmail, harness the power of fame by sharing the video you film as widely as possible. Avoid giving another party access to video of your image. Always keep a copy for yourself, so that you can challenge any claims made by other parties.

Many online platforms have taken a hard stance on deepfakes: many porn-sharing sites actively take them down, and Reddit has banned communities that produce them.

There are also active communities of researchers trying to create algorithms to easily identify deepfakes, taking away their power to deceive the public.

Right now, it’s important for government to raise awareness of how effectively deepfakes can deceive the public as the technology improves in sophistication.

Currently there’s an online battle for truth, as think tanks and news agencies attempt to counter fake news stories that have become ubiquitous in certain communities online. Once fake news is supported by deepfake productions offering seemingly irrefutable evidence, the power of truth in other sources all but dies.

That is why the Buscemi-Lawrence video is powerful. It reveals the extent of the deception: how we can all be deceived as technology begins to surpass our ability to distinguish what is real and what is not.